High Availability Container Resources

Summary

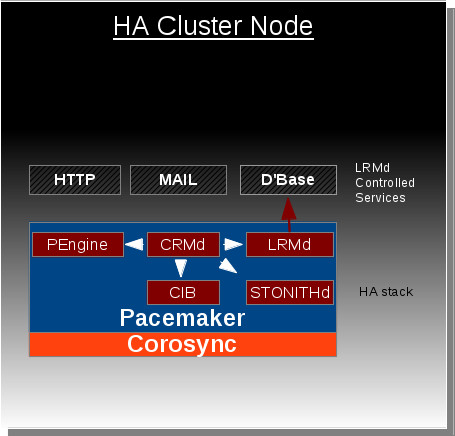

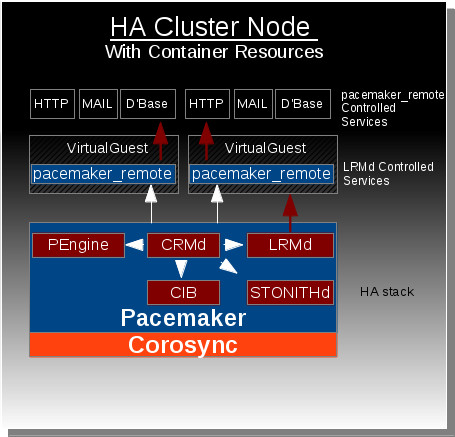

The Container Resource feature allows the HA stack (Pacemaker + Corosync) residing on a host machine to extend management of resources into virtual guest instances (KVM/Linux Containers) through use of the pacemaker_remote service.

Owner

- Name: David Vossel

- Email: <dvossel@redhat.com>

Current status

- Targeted release: Fedora 19

- Last updated: 2013-03-12

- Percentage of completion: 100%

Detailed Description

This feature is in response to the growing desire for high availability functionality to be extended outside of the host into virtual guest instances. Pacemaker is currently capable of managing virtual guests, meaning Pacemaker can start/stop/monitor/migrate virtual guests anywhere in the cluster, but Pacemaker has no ability to manage the resources that live within the virtual guests. At the moment these virtual guests are very much a black box to Pacemaker.

The Container Resources feature changes this by giving Pacemaker the ability to reach into the virtual guests and manage resources in exactly the same way resources are managed on the host nodes. Ultimately this gives the HA stack the ability to manage resources across all the nodes in the cluster as well as any virtual guests that reside within those cluster nodes. The key component that makes this functionality possible is the new pacemaker_remote service, which allows Pacemaker to integrate non-cluster nodes (remote-nodes not running corosync+pacemaker) into the cluster.

Benefit to Fedora

This feature expands our current high availability functionality to span across both physical bare-metal cluster nodes and the virtual environments that reside within those nodes. Without this feature, there is currently no direct approach for achieving this functionality.

Scope

pacemaker_remote:

The pacemaker_remote service is a modified version of Pacemaker's existing LRMD that allows for remote communication over TCP. This service also provides an interface for remote nodes to communicate with the connecting cluster node's internal components (cib, crmd, attrd, stonithd). TLS is used with PSK encryption/authentication to secure the connection between the pacemaker_remote client and server. Cluster nodes integrate remote-nodes into the cluster by connecting to the remote-node's pacemaker_remote service.

Pengine Container Resource Support:

Pacemaker's policy engine component needs to understand how to represent and manage container resources. This amounts to the policy engine treating a container resource as both a resource and, once it has started, a location where other resources can be placed. The policy engine will include routing information in each resource action to specify which node (the local host or a remote guest) the action should go to. This is how the CRMD will know to route certain actions to a pacemaker_remote instance living on a virtual guest rather than to the local LRMD instance living on the host.

CRMD-Routing:

Pacemaker's CRMD component must now be capable of routing LRMD commands to both the local LRMD and pacemaker_remote instances residing on virtual guests.

Defining Linux Container Use-cases:

Part of this project is to identify interesting use-cases for Linux Containers in an HA environment. This project aims at exploring use of libvirt-lxc in HA environments to dynamically define and launch Linux Containers throughout the cluster as well as manage the resources within those containers.

PCS management support for Container Resources:

The pcs management tool for pacemaker needs to support whatever configuration mechanism is settled upon for representing container resources in the CIB.

How To Test

These are the high-level steps required to use this feature with KVM guests using libvirt. It is also possible to use this with libvirt-lxc guests, but since the steps required to get this working with Linux containers are not as generic as in the KVM case, this section focuses solely on the KVM case for now.

1. Generate a pacemaker_remote authentication key.

- dd if=/dev/urandom of=/etc/pacemaker/authkey bs=4096 count=1

Place the authkey file in the /etc/pacemaker/ folder on every cluster-node that is capable of launching a KVM guest.
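
Step 1 can be sketched as a small script. The KEY_DIR variable and its scratch-directory default are assumptions added here so the sketch can be tried without touching /etc/pacemaker; on a real cluster-node KEY_DIR would be set to /etc/pacemaker. The checksum line is also an addition, intended only as a simple way to confirm that every node ends up with an identical copy of the key.

```shell
#!/bin/sh
# Sketch only: KEY_DIR is a hypothetical variable, not a pacemaker setting.
# It defaults to a scratch directory; set KEY_DIR=/etc/pacemaker on a real node.
KEY_DIR="${KEY_DIR:-$(mktemp -d)}"
KEY_FILE="$KEY_DIR/authkey"

mkdir -p "$KEY_DIR"

# Same command as in step 1: write 4096 random bytes to the key file.
dd if=/dev/urandom of="$KEY_FILE" bs=4096 count=1 2>/dev/null

# Print a checksum so copies of the key on other nodes can be compared by hand.
sha256sum "$KEY_FILE"
```

Running sha256sum on each node's copy of the key and comparing the digests is one way to catch a key that was regenerated on one node but not redistributed.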

2. On a Pacemaker cluster-node, create a virtual KVM guest running Fedora 19.

- Define a static network address and make sure to set the hostname.

- Install the pacemaker-remote and resource-agents packages. Set pacemaker-remote to launch on startup (systemctl enable pacemaker_remote).

- Install the authkey created in step 1 in the /etc/pacemaker/ folder.
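
The guest-side part of step 2 might look like the following when run inside the guest. This is a sketch under several assumptions: the run wrapper, the DRY_RUN, GUEST_HOSTNAME and AUTHKEY_SRC variables are hypothetical names introduced here, yum and hostnamectl are assumed to be the package and hostname tools available on the guest, and static network configuration is left out because it depends on the environment. By default the script only prints the commands it would run.

```shell
#!/bin/sh
# Hypothetical variables for illustration; none of these are pacemaker settings.
DRY_RUN="${DRY_RUN:-1}"                       # 1 = print commands, 0 = execute them
GUEST_HOSTNAME="${GUEST_HOSTNAME:-guest1}"    # hostname the cluster will contact
AUTHKEY_SRC="${AUTHKEY_SRC:-./authkey}"       # copy of the key generated in step 1

# Print the command in dry-run mode, otherwise execute it unchanged.
run() {
    if [ "$DRY_RUN" = "1" ]; then
        printf '+ %s\n' "$*"
    else
        "$@"
    fi
}

# Set the hostname that the remote-node meta-attribute will refer to.
run hostnamectl set-hostname "$GUEST_HOSTNAME"

# Install the packages named in step 2 and enable the service at boot.
run yum install -y pacemaker-remote resource-agents
run systemctl enable pacemaker_remote

# Install the shared key where pacemaker_remote expects it.
run mkdir -p /etc/pacemaker
run cp "$AUTHKEY_SRC" /etc/pacemaker/authkey
```

Setting DRY_RUN=0 executes the commands for real, which requires root privileges inside the guest.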

3. Using pcs on a cluster-node, define a virtual guest resource using the VirtualDomain agent.

In this example the resource is called 'vm-guest1' which launches the kvm guest created in step 2. After the cluster node launches vm-guest1, it attempts to connect to that node's pacemaker_remote service using the hostname supplied in the remote-node meta-attribute. In this case pacemaker expects to be able to contact the hostname 'guest1' after the 'vm-guest1' resource launches. Once that connection is made, 'guest1' will become visible as a node capable of running resources when viewing the output of crm_mon.

Make sure that the resource id and the remote-node hostname differ from each other; they cannot be the same. Below is an example of how to configure vm-guest1 as a Container Resource using pcs.

- pcs resource create vm-guest1 VirtualDomain hypervisor="qemu:///system" config="vm-guest1.xml" meta remote-node=guest1
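
Because the resource id and the remote-node hostname must differ, it can help to check the two names before handing them to pcs. The helper below is a hypothetical convenience, not part of pcs or Pacemaker: it refuses identical names and otherwise prints the same command shown in step 3 so it can be reviewed before being run.

```shell
#!/bin/sh
# Hypothetical helper: print the step 3 pcs command, or fail when the
# resource id and the remote-node hostname are identical.
container_resource_cmd() {
    res_id=$1        # resource id, e.g. vm-guest1
    remote_node=$2   # hostname of the guest, e.g. guest1
    domain_xml=$3    # libvirt domain definition passed as config=

    if [ "$res_id" = "$remote_node" ]; then
        echo "error: resource id and remote-node name must differ: $res_id" >&2
        return 1
    fi

    printf 'pcs resource create %s VirtualDomain hypervisor="qemu:///system" config="%s" meta remote-node=%s\n' \
        "$res_id" "$domain_xml" "$remote_node"
}

# Prints the command from step 3 for review.
container_resource_cmd vm-guest1 guest1 vm-guest1.xml
```

Printing the command rather than executing it keeps the check usable on a machine that has no cluster stack installed.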

4. Using pcs on the host node, define a Dummy resource.

- pcs resource create FAKE Dummy

Run crm_mon to see where Pacemaker launched the 'FAKE' resource. To force the resource to run on the 'guest1' node, run the following command.

- pcs constraint location FAKE prefers guest1

5. The KVM guest should now be able to run resources just as if it were a cluster-node.
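
Steps 4 and 5 can be collected into a short script for repeated testing. As a sketch, it assumes the 'guest1' node from step 3 is already connected; the run wrapper and the DRY_RUN variable are hypothetical additions, and the -1 (one-shot) option to crm_mon is used here so that the status is printed once instead of opening the interactive display. By default the script only prints the commands.

```shell
#!/bin/sh
# Hypothetical dry-run switch: 1 = print commands, 0 = execute them.
DRY_RUN="${DRY_RUN:-1}"

# Print the command in dry-run mode, otherwise execute it unchanged.
run() {
    if [ "$DRY_RUN" = "1" ]; then
        printf '+ %s\n' "$*"
    else
        "$@"
    fi
}

# Step 4: define a Dummy resource and prefer the guest as its location.
run pcs resource create FAKE Dummy
run pcs constraint location FAKE prefers guest1

# Step 5: print the cluster status once to see where FAKE is running.
run crm_mon -1
```

With DRY_RUN=0 on a cluster-node, the final status output is where 'FAKE' should be reported as running on 'guest1'.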

User Experience

Users will be able to define resources as container resources. Once a container resource is defined, that resource becomes a location capable of running resources just as if it was another cluster node.

Dependencies

No new Pacemaker dependencies will be required for this feature. A list of the current dependencies can be found here. [1]

Contingency Plan

The part of the project that covers researching and defining use-cases for Linux Containers in an HA environment runs the risk of being incomplete before Fedora 19. The Linux Container support required to achieve this goal is not well understood, and it may or may not be possible depending on the capabilities of libvirt-lxc and potentially systemd at the time of release. This outcome will not affect the release of this feature, however, because the KVM use-case is well understood.

If this feature is not complete by development freeze, the functionality necessary to configure this feature will be disabled in the stable configuration scheme. The feature is designed so that it can be disabled without negatively affecting any existing functionality.

Documentation

- Upstream documentation specific to this feature is complete: Pacemaker Remote Doc

Release Notes

- Pacemaker now supports managing resources remotely on non-cluster nodes through the use of the pacemaker_remote service. This feature allows Pacemaker to manage both virtual guests and the resources that live within the guests, all from the host cluster node, without requiring the guest nodes to run the cluster stack. See http://clusterlabs.org/doc/en-US/Pacemaker/1.1-pcs/html-single/Pacemaker_Remote for more information.