Introduction

Purpose

The intent of this document is to provide the Open Source and Red Hat communities with a guide to deploying OpenStack infrastructures using the Puppet/Foreman system management solution.

We describe how to deploy and provision the management system itself, and how to use it to deploy OpenStack Controller and OpenStack Compute nodes.

Assumptions

- Upstream OpenStack based on Folsom (2012.2) from EPEL6

- The Operating System is Red Hat Enterprise Linux - RHEL6.4+. All machines (virtual or physical) have been provisioned with a base RHEL6 system and are up to date.

- Using Puppet Labs for Puppet

- The system management is based on Foreman 1.1 from the Foreman Yum Repo

- Foreman can also provide full system provisioning, but this is not covered here, at least for now.

- Foreman Smart-proxy runs on the same host as Foreman. Please adjust accordingly if running on a separate host.

Definitions

| Name | Description |
|---|---|
| Host Group | Foreman definition grouping environment, Puppet classes and variables together to be inherited by hosts |
| OpenStack Controller node | Server with all OpenStack modules to manage OpenStack Compute nodes |
| OpenStack Compute node | Server running the OpenStack Nova Compute and Nova Network modules, providing OpenStack cloud instances |
| RHEL Core | Base operating system installed with standard RHEL packages and the specific configuration required by all systems (or hosts) |

Architecture

The idea is to have a management system that can quickly deploy OpenStack Controllers or OpenStack Compute nodes.

OpenStack Components

An OpenStack Controller server groups the following components:

- OpenStack Keystone, the identity service

- OpenStack Glance, the image repository

- OpenStack Nova Scheduler

- OpenStack Horizon, the dashboard

- OpenStack Nova API

- Qpid, the AMQP messaging broker

- MySQL backend

- An OpenStack-Compute

An OpenStack Compute node consists of the following modules:

- OpenStack Nova Compute

- OpenStack Nova Network

- OpenStack Nova API

- Libvirt and dependent packages

Environment

The following environment has been tested to validate all the procedures described in this document:

- Management System: either a physical or a virtual machine

- OpenStack controller: physical machine

- OpenStack compute nodes: several physical machines

High level work-flow

The goal is to achieve the OpenStack deployment in four steps:

- Deploy the system management solution Foreman

- Prepare Foreman for OpenStack

- Deploy the RHEL core definition with Puppet agent on participating OpenStack nodes

- Manage each OpenStack node to be either a Controller or a Compute node

RHEL Core: Common definitions

The Management server itself is based upon the RHEL Core so we define it first.

In the rest of this documentation we assume that every system:

- Uses the latest Red Hat Enterprise Linux version 6.x. We have tested with RHEL6.4.

- Is registered and subscribed with a Red Hat account, either RHN Classic or RHSM. We have tested with RHSM.

- Has been updated with the latest packages

- Has been configured with the following definitions

Time

The NTP service is required and included during the deployment of OpenStack components.

However, for Puppet to work properly with SSL, all the physical machines must have their clocks in sync.

Make sure all the hardware clocks are:

- Using the same time zone

- On time, within 5 minutes of each other
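Before registering a node, it can help to check its clock against the management server. The sketch below assumes a POSIX shell. `clock_skew_ok` is a hypothetical helper and is not part of Puppet or NTP. It takes two Unix timestamps and succeeds when they are less than 300 seconds (the 5 minutes above) apart.

```shell
# Hypothetical helper: succeed when two Unix timestamps (seconds) are
# less than MAX_SKEW seconds apart (default 300 s = 5 minutes).
clock_skew_ok() {
    local_ts=$1
    remote_ts=$2
    max_skew=${3:-300}
    delta=$((local_ts - remote_ts))
    # Use the absolute difference, whichever clock is ahead
    [ "$delta" -lt 0 ] && delta=$((-delta))
    [ "$delta" -lt "$max_skew" ]
}
```

For example, assuming root SSH access to the management server: `clock_skew_ok "$(date +%s)" "$(ssh root@puppet.example.org date +%s)" && echo "clock OK"`. Because it compares epoch seconds, it checks the clocks themselves, not the time zone setting.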

Yum Repositories

Activate the following repositories:

- RHEL6 Server Optional RPMS

- EPEL6

    rpm -Uvh http://download.fedoraproject.org/pub/epel/6/x86_64/epel-release-6-8.noarch.rpm
    yum-config-manager --enable rhel-6-server-optional-rpms
    yum clean all

We need the Augeas utility for manipulating configuration files:

yum -y install augeas

SELinux

SELinux is a requirement for our projects. However, at the time of writing, SELinux has not been fully validated for:

- Foreman

- OpenStack

In the meantime, switch SELinux to permissive mode:

setenforce 0

And make it persistent in /etc/selinux/config file:

    SELINUX=permissive
    SELINUXTYPE=targeted
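The persistent setting can also be scripted. This minimal sketch uses a hypothetical `set_selinux_permissive` function. It rewrites any existing `SELINUX` line, tolerating stray spaces around `=`, or appends one if none exists. The `SELINUXTYPE` line is left untouched.

```shell
# Hypothetical helper: set SELINUX=permissive in an SELinux config file
# (default /etc/selinux/config). An existing SELINUX line is rewritten,
# even with spaces around "="; otherwise one is appended.
# SELINUXTYPE does not match the pattern, so it is left untouched.
set_selinux_permissive() {
    conf=${1:-/etc/selinux/config}
    if grep -q '^SELINUX[[:space:]]*=' "$conf"; then
        sed -i 's/^SELINUX[[:space:]]*=.*/SELINUX=permissive/' "$conf"
    else
        printf 'SELINUX=permissive\n' >> "$conf"
    fi
}
```

Run `set_selinux_permissive` as root to edit /etc/selinux/config. After `setenforce 0`, `getenforce` should report `Permissive`.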

FQDN

Make sure every host can resolve the Fully Qualified Domain Name of the management server, either through the available DNS or, alternatively, through the /etc/hosts file.
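When DNS is not available, the /etc/hosts entry can be added idempotently. `add_host_entry` is a hypothetical helper. The IP address in the usage example is a documentation placeholder, not a value from this guide.

```shell
# Hypothetical helper: append "IP FQDN SHORTNAME" to a hosts file
# (default /etc/hosts) unless the FQDN is already listed there.
add_host_entry() {
    ip=$1
    fqdn=$2
    hosts=${3:-/etc/hosts}
    short=${fqdn%%.*}
    # Look for the FQDN as a whole name field, ignoring commented lines
    if awk -v name="$fqdn" '
        $1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == name) found = 1 }
        END { exit !found }
    ' "$hosts"; then
        return 0
    fi
    printf '%s\t%s %s\n' "$ip" "$fqdn" "$short" >> "$hosts"
}
```

For example, run `add_host_entry 192.0.2.10 puppet.example.org`, then check resolution with `getent hosts puppet.example.org`.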

Puppet Agent

The Puppet agent must be installed on every host and configured to:

- Point to the Puppet Master which is our Management server

- Have Puppet plug-ins activated

The following commands make that happen:

    PUPPETMASTER="puppet.example.org"
    yum install -y puppet
    # Set the Puppet server
    augtool -s set /files/etc/puppet/puppet.conf/agent/server "$PUPPETMASTER"
    # Activate Puppet plug-ins
    augtool -s set /files/etc/puppet/puppet.conf/main/pluginsync true

Afterwards, the /etc/puppet/puppet.conf file should look like this:

    [main]
    # The Puppet log directory.
    # The default value is '$vardir/log'.
    logdir = /var/log/puppet
    # Where Puppet PID files are kept.
    # The default value is '$vardir/run'.
    rundir = /var/run/puppet
    # Where SSL certificates are kept.
    # The default value is '$confdir/ssl'.
    ssldir = $vardir/ssl
    pluginsync=true

    [agent]
    # The file in which puppetd stores a list of the classes
    # associated with the retrieved configuration. Can be loaded in
    # the separate ``puppet`` executable using the ``--loadclasses``
    # option.
    # The default value is '$confdir/classes.txt'.
    classfile = $vardir/classes.txt
    # Where puppetd caches the local configuration. An
    # extension indicating the cache format is added automatically.
    # The default value is '$confdir/localconfig'.
    localconfig = $vardir/localconfig
    server=puppet.example.org
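To confirm that each setting landed in the right section, a small awk-based reader can print one key from an INI-style file. `ini_get` is a hypothetical helper that assumes simple `key = value` lines. It does not expand Puppet variables such as `$vardir`.

```shell
# Hypothetical helper: print the value of KEY in [SECTION] of an
# INI-style file such as /etc/puppet/puppet.conf. Comment lines are
# skipped; if the key appears twice, the last value wins.
# Returns non-zero when the key is not found.
ini_get() {
    awk -v section="$2" -v key="$3" '
        /^[ \t]*\[/ {
            # Section header: drop blanks, then compare with "[SECTION]"
            header = $0
            gsub(/[ \t]/, "", header)
            in_section = (header == "[" section "]")
            next
        }
        in_section && !/^[ \t]*[#;]/ {
            n = index($0, "=")
            if (n == 0) next
            k = substr($0, 1, n - 1)
            v = substr($0, n + 1)
            gsub(/^[ \t]+|[ \t]+$/, "", k)
            gsub(/^[ \t]+|[ \t]+$/, "", v)
            if (k == key) { value = v; found = 1 }
        }
        END { if (found) print value; exit !found }
    ' "$1"
}
```

`ini_get /etc/puppet/puppet.conf agent server` should print `puppet.example.org`. Puppet's own view of the effective value is available with `puppet agent --configprint server`.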

Management Server

Let's get started with deploying the Puppet/Foreman application suite that manages our OpenStack infrastructure.

We describe two installation methods for the Management application:

- Automated Installation: This is the easiest and recommended approach.

- Manual Installation: walks you through each component's deployment. Helpful for other OpenStack architecture scenarios and for troubleshooting.

Automated Installation

The Automated installation of the Management server provides:

- Puppet Master

- HTTPS service with Apache SSL and Passenger

- Foreman Proxy (Smart-proxy) and Foreman

- No SELinux

Before starting, make sure the "RHEL Core: Common definitions" described earlier have been applied.

To get the management suite installed, configured and running, we use puppet itself.

The following commands are to be executed on the Management machine:

    # Get packages
    yum install -y puppet git policycoreutils-python
    # Get foreman-installer modules
    git clone --recursive https://github.com/theforeman/foreman-installer.git /root/foreman-installer
    # Install
    puppet apply -v --modulepath=/root/foreman-installer -e "include puppet, puppet::server, passenger, foreman_proxy, foreman"

Foreman should then be accessible at https://host1.example.org.

You will be prompted to sign in: use the default user “admin” with the password “changeme”.

Optional Mysql Backend

Let's install the DBMS and enable the service at boot:

    yum install -y mysql-server
    chkconfig mysqld on
    service mysqld start

Then we initialise the MySQL database by setting its root password:

    MYSQL_ADMIN_PASSWD='mysql'
    /usr/bin/mysqladmin -u root password "${MYSQL_ADMIN_PASSWD}"
    /usr/bin/mysqladmin -u root -h "$(hostname)" password "${MYSQL_ADMIN_PASSWD}"

Puppet database

We need to create a Puppet database and grant permissions on it to its user, “puppet”. The following commands will do that for us:

    MYSQL_PUPPET_PASSWD='puppet'
    echo "create database puppet; GRANT ALL PRIVILEGES ON puppet.* TO puppet@localhost IDENTIFIED BY '$MYSQL_PUPPET_PASSWD'; commit;" | mysql -u root -p
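The inline `echo` above breaks if the password contains a single quote. The hypothetical `db_grant_sql` helper below is a slightly more defensive sketch. It escapes backslashes and doubles single quotes before building the statements, assuming MySQL's default string escaping rules. Database and user names are assumed to be simple identifiers.

```shell
# Hypothetical helper: print SQL that creates database DB and grants
# USER@localhost all privileges on it with password PASS.
# Backslashes are escaped and single quotes doubled so the password
# stays a valid MySQL string literal. DB and USER are not escaped.
db_grant_sql() {
    db=$1
    user=$2
    pass=$(printf '%s' "$3" | sed -e 's/\\/\\\\/g' -e "s/'/''/g")
    printf 'CREATE DATABASE IF NOT EXISTS %s;\n' "$db"
    printf "GRANT ALL PRIVILEGES ON %s.* TO '%s'@'localhost' IDENTIFIED BY '%s';\n" \
        "$db" "$user" "$pass"
}
```

Usage: `db_grant_sql puppet puppet "$MYSQL_PUPPET_PASSWD" | mysql -u root -p`.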

Finally we adjust the /etc/puppet/puppet.conf file for mysql.

    augtool -s set /files/etc/puppet/puppet.conf/master/storeconfigs true
    augtool -s set /files/etc/puppet/puppet.conf/master/dbadapter mysql
    augtool -s set /files/etc/puppet/puppet.conf/master/dbname puppet
    augtool -s set /files/etc/puppet/puppet.conf/master/dbuser puppet
    augtool -s set /files/etc/puppet/puppet.conf/master/dbpassword \
        "$MYSQL_PUPPET_PASSWD"
    augtool -s set /files/etc/puppet/puppet.conf/master/dbserver localhost
    augtool -s set /files/etc/puppet/puppet.conf/master/dbsocket \
        /var/lib/mysql/mysql.sock
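The seven `[master]` settings can also be applied in a single augtool session. This sketch assumes that augtool reads commands from standard input when none are given on the command line. It also assumes the password contains no whitespace or quotes. `puppet_storeconfigs_cmds` is a hypothetical helper.

```shell
# Hypothetical helper: print one augtool "set" command per line for the
# [master] storeconfigs settings, ready to pipe into a single augtool run.
# Assumes DBPASS contains no whitespace or quotes.
puppet_storeconfigs_cmds() {
    dbpass=$1
    base=/files/etc/puppet/puppet.conf/master
    # printf reuses its format for each group of three arguments
    printf 'set %s/%s %s\n' \
        "$base" storeconfigs true \
        "$base" dbadapter mysql \
        "$base" dbname puppet \
        "$base" dbuser puppet \
        "$base" dbpassword "$dbpass" \
        "$base" dbserver localhost \
        "$base" dbsocket /var/lib/mysql/mysql.sock
}
```

Usage: `puppet_storeconfigs_cmds "$MYSQL_PUPPET_PASSWD" | augtool -s`.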

Foreman database

First off we need the mysql gems for foreman:

yum -y install 'foreman-mysql*'

We need to configure Foreman to use our MySQL Puppet database.

Modify the /etc/foreman/database.yml file so the production section looks like this:

    production:
      adapter: mysql2
      database: puppet
      username: puppet
      password: puppet
      host: localhost
      socket: "/var/lib/mysql/mysql.sock"

Then let Foreman populate the database:

cd /usr/share/foreman && RAILS_ENV=production rake db:migrate

Mysql Optimisation

This optimisation is optional and should be done only once the puppet database has been created and populated.

Run the following create index command. You'll be prompted for the MySQL root password (MYSQL_ADMIN_PASSWD) specified earlier:

echo "create index exported_restype_title on resources (exported, restype, title(50));" | mysql -u root -p -D puppet

Set-up Foreman

Foreman needs to be configured according to our needs. We need to:

- Setup the smart-proxy

- Define global variables

- Download the Puppet Modules

- Declare the hostgroups

Smart-Proxy

Once the Foreman-proxy and Foreman services are up and running, we need to link them together:

foreman-setup proxy

Import OpenStack Puppet Modules

We need to download the OpenStack Puppet modules from the GitHub project. All the OpenStack components are installed from those modules:

git clone --recursive https://github.com/gildub/puppet-openstack.git /etc/puppet/modules/production

Along with the nova-compute and nova-controller installer:

git clone https://bitbucket.org/gildub/trystack.git /etc/puppet/modules/production/trystack

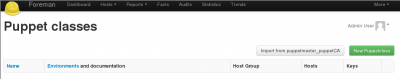

We import the Puppet modules into Foreman using either:

- The GUI: Select “More -> Configuration -> Puppet classes” and click the “Import from <your_smart_proxy>” button:

- Command line:

cd /usr/share/foreman && rake puppet:import:puppet_classes RAILS_ENV=production

Parameters

We provide all the parameters required by the OpenStack Puppet modules to configure the different components:

foreman-setup globals

Host Groups

Host Groups are an easy way to group Puppet class modules and parameters. A host attached to a Host Group automatically inherits those definitions. We manage the two types of OpenStack server using Foreman Host Groups.

So, we need to create two Host Groups:

- OpenStack-Controller

- OpenStack Compute Nodes

foreman-setup hostgroups

Manage a Host

To make a system part of our OpenStack infrastructure we have to:

- Make sure the host follows the Common Core definitions – See RHEL Core: Common definitions section above

- Have the host's certificate signed so it's registered with the Management server

- Assign the host either the openstack-controller or openstack-compute Host Group

Register Host Certificates

Using Autosign

With the autosign option, hosts can be registered automatically and made visible in Foreman by adding their hostnames to the /etc/puppet/autosign.conf file.
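Entries in autosign.conf are certnames, one per line, and may use a domain glob such as `*.example.org`. The hypothetical `autosign_add` helper below appends an entry only once.

```shell
# Hypothetical helper: add a certname or glob (e.g. "*.example.org")
# to an autosign file (default /etc/puppet/autosign.conf) unless the
# exact same line is already present.
autosign_add() {
    entry=$1
    file=${2:-/etc/puppet/autosign.conf}
    touch "$file"
    # -x whole line, -F literal match so "*" is not treated as a pattern
    grep -qxF -- "$entry" "$file" || printf '%s\n' "$entry" >> "$file"
}
```

For example, run `autosign_add host3.example.org`. A domain glob lets any host in that domain register without review, so listing individual hostnames is the more cautious choice.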

Signing Certificates

If you're not using the autosign option then you will have to sign the host certificate, using either:

- Foreman GUI

Open the Smart Proxies window from the menu "More -> Configuration -> Smart Proxies", and select "Certificates" from the drop-down button of the smart-proxy you created:

From there you can manage all the hosts' certificates and get them signed.

- The Command Line Interface

Assuming the Puppet agent (puppetd) is running on the host, the host will have sent its certificate request to the Puppet Master, where it waits to be signed. On the Puppet Master host, use the “puppetca” tool with the “list” command to see the waiting certificates, for example:

    # puppetca list
    "host3.example.org" (84:AE:80:D2:8C:F5:15:76:0A:1A:4C:19:A9:B6:C1:11)

To sign a certificate, use the “sign” command and provide the hostname, for example:

puppetca sign host3.example.org
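When several nodes are waiting, the list output can be filtered and signed in a loop. This sketch assumes the `"hostname" (fingerprint)` format shown above. `pending_hosts` is a hypothetical helper that prints the pending certnames in a given domain.

```shell
# Hypothetical helper: read "puppetca list" output on stdin and print the
# pending certnames ending in ".DOMAIN", one per line. Assumes the
# "hostname" (fingerprint) format, with or without the double quotes.
pending_hosts() {
    domain=$1
    awk -v suffix=".$domain" '
        NF > 0 {
            name = $1
            gsub(/"/, "", name)
            # Keep only names that end with the requested domain suffix
            if (length(name) > length(suffix) &&
                substr(name, length(name) - length(suffix) + 1) == suffix)
                print name
        }
    '
}
```

Usage: `puppetca list | pending_hosts example.org | while read -r h; do puppetca sign "$h"; done`. Review the list before signing, because every printed host becomes trusted.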

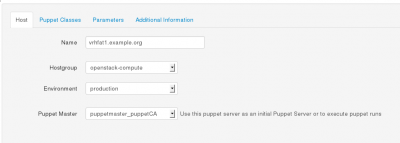

Assign a Host Group

Display the hosts using the “Hosts” button at the top of the Foreman GUI screen.

Then select the corresponding “Edit Host” drop-down button on the right side of the targeted host.

Assign the right environment and attach the appropriate Host Group to that host in order to make it a Controller or a Compute node.

Save by hitting the “Submit” button.

Deploy OpenStack Components

We are done!

The OpenStack components will be installed when the Puppet agent synchronises with the Management server: the classes are applied when the agent retrieves the catalog from the Master and runs it.

You can also trigger the agent manually to check in with the puppetmaster. To do so, first stop the agent service on the targeted node:

service puppet stop

And run it manually:

puppet agent --verbose --no-daemonize